Most executives have experimented with AI in some form by now, whether that means generating a summary, drafting a memo, or running a quick analysis. What remains uncommon is using it as a structured part of how consequential decisions actually get made: not as a shortcut, but as a layer of discipline that improves the quality of judgment before the call is made. This piece is for leaders who want to move past experimentation and understand what AI decision making looks like when it is applied seriously at the executive level.

Why good leaders still make bad decisions

Senior leaders make a lot of decisions, and most of them are not hard in isolation. The problem is volume, speed, and cognitive load accumulating across a day, so that by the time a genuinely consequential call arrives, the mental resources available to reason through it carefully are already depleted.

Research from McKinsey Global Institute found that executives spend around 37% of their working time on decisions that could be partially systematized, which means that time competes directly with the strategic calls that actually require a CEO’s full attention. Beyond the time problem, there is the bias problem, and unlike time pressure, cognitive biases are structural rather than situational. Availability bias means the most recent or memorable event carries more influence than it deserves in the analysis. Anchoring bias makes it difficult to revise an early estimate once it has landed on the table. Groupthink compounds both when no one in the room is willing to say the uncomfortable thing out loud, which in high-stakes decisions tends to be precisely when it matters most.

None of this reflects a character flaw. It is what happens when experienced people operate under pressure with incomplete information, and the question worth asking is whether the process around a decision is designed to compensate for these dynamics, or whether it simply hopes they do not surface.

Defining AI decision making in a corporate context

AI decision making at the executive level does not mean letting an algorithm make the call. It means using AI to improve the quality of analysis, challenge the logic, and stress-test the reasoning so that the human making the decision is working from a more rigorous foundation than they would otherwise have.

In practice, AI operates across three layers in a well-structured decision process. The first is signal extraction, where AI pulls from financial systems, customer data, market intelligence, and competitive filings simultaneously, surfacing what is actually relevant to the decision at hand rather than what happens to be in the most recent presentation.

The second is scenario modeling, where instead of evaluating two or three obvious options, AI generates a wider set of plausible outcomes, models the dependencies between variables, and quantifies what happens under different assumptions, which is where AI adds the most value that traditional analysis cannot match at the same speed.

The third is structured challenge, where AI is prompted to argue against the preferred direction, identify the strongest case for the alternative, and surface what has been underweighted in internal discussion, without the political capital and career dynamics that prevent honest dissent in most boardrooms.

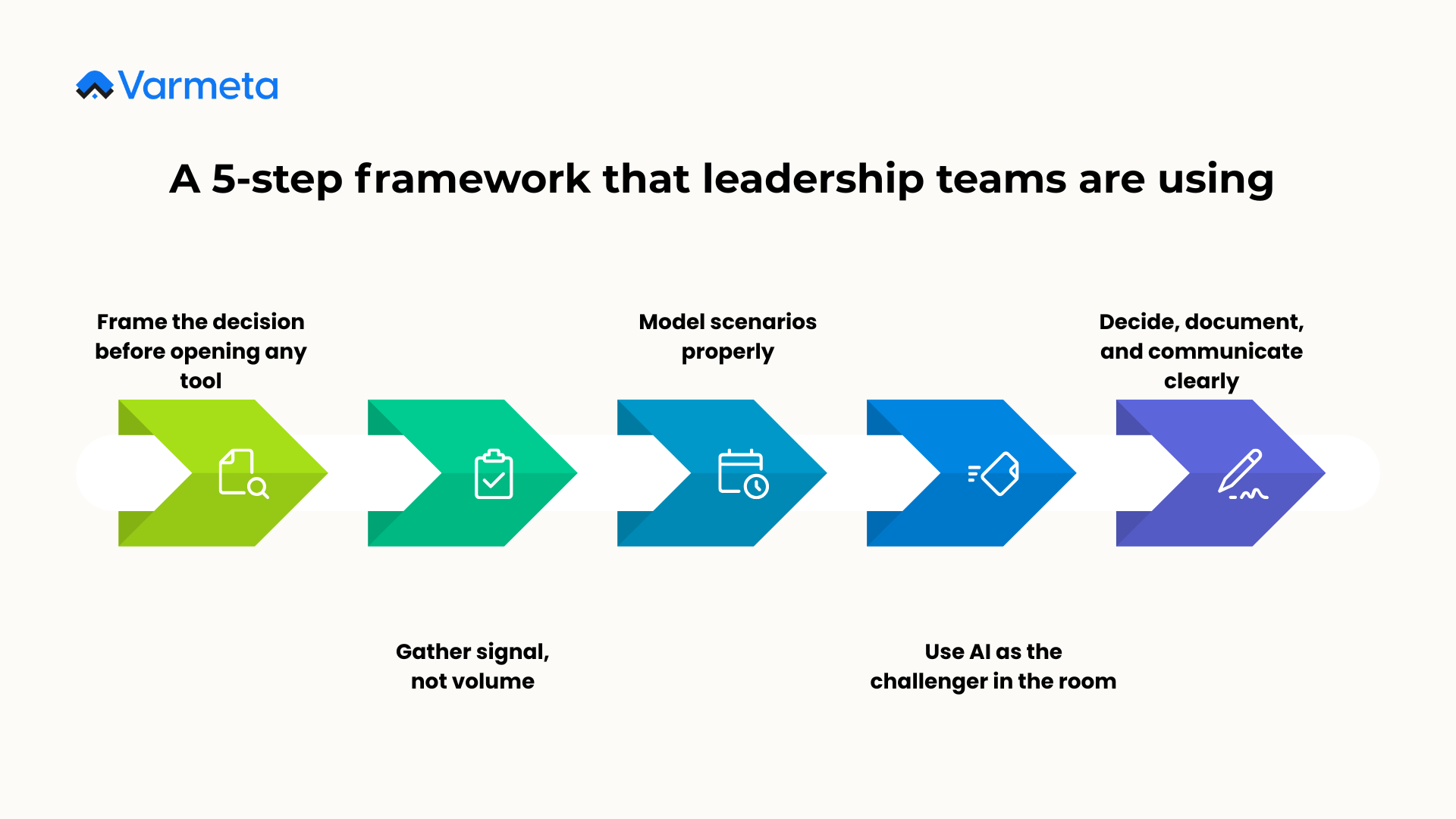

A 5-step framework that leadership teams are using

Step 1: Frame the decision before opening any tool

The quality of AI output depends almost entirely on the quality of the input, so before anything else, the decision needs a precise statement of what outcome is being decided, what the real constraints are, and what success looks like in measurable terms twelve months out.

A useful technique at this stage is to use AI to stress-test the problem statement itself: ask it to identify hidden assumptions, flag internal contradictions, and restate the question more precisely. This step alone regularly surfaces issues that would have derailed the analysis further downstream, and it takes less than thirty minutes to run.

In practice, the prompt is simple: “Here is the decision we are trying to make. What assumptions are we making that we have not stated explicitly? Where might the objectives conflict with each other? How would you restate this question more precisely?” The quality of what comes back will tell a leadership team quickly whether they are solving the right problem.

Step 2: Gather signal, not volume

More data is not the goal. Relevant data is, and AI can aggregate across disparate sources including financial models, customer data, regulatory context, and competitor intelligence, then filter out what does not bear directly on the defined decision.

The shift this enables is not about access, since most organizations already have the data, but about synthesis at a speed and breadth that human analyst teams cannot match manually. A team that previously needed a week to consolidate inputs from five departments can now have a synthesized brief in hours, with confidence levels annotated and contradictions flagged.

The practical starting point is to define the three to five questions that the decision actually hinges on, then task AI with pulling everything relevant to those specific questions rather than running a broad information sweep.

Step 3: Model scenarios properly

Most strategic decisions get evaluated against one or two obvious alternatives, which is not scenario planning so much as confirmation of a direction already chosen. AI can generate five or six well-constructed scenarios with modeled outcomes, dependencies, second-order effects, and risk profiles in the time it previously took a team to build one. The reason most leadership teams skip this step has never really been about capability or intent. It has been about time, and that constraint is now largely gone.
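To make the idea of evaluating a grid of assumptions concrete, here is a minimal Python sketch. It is a toy illustration, not a description of any particular tool: the `Scenario` class, the `model_scenarios` function, and the revenue formula are all hypothetical, and price change and churn are treated as independent inputs purely to keep the example short. A real model would link them.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Scenario:
    price_change: float       # fractional price change, e.g. 0.10 means +10%
    churn_rate: float         # fraction of customers lost over the period
    projected_revenue: float  # customers retained * adjusted price


def model_scenarios(base_customers, base_price, price_changes, churn_rates):
    """Evaluate every combination of the supplied assumptions.

    Returns scenarios sorted from worst to best projected revenue, so the
    downside cases are read first rather than last.
    """
    scenarios = []
    for price_change, churn_rate in product(price_changes, churn_rates):
        customers = base_customers * (1 - churn_rate)
        price = base_price * (1 + price_change)
        scenarios.append(Scenario(price_change, churn_rate, customers * price))
    return sorted(scenarios, key=lambda s: s.projected_revenue)


if __name__ == "__main__":
    # Illustrative inputs only; none of these figures come from real data.
    for s in model_scenarios(1000, 100.0, [0.0, 0.1], [0.05, 0.2]):
        print(f"price {s.price_change:+.0%}, churn {s.churn_rate:.0%}: "
              f"revenue {s.projected_revenue:,.0f}")
```

Sorting worst-first is a deliberate choice in this sketch: it puts the failure cases at the top of the list, which is where a team inclined to confirm its preferred direction is least likely to look.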

Step 4: Use AI as the challenger in the room

Boardrooms have a well-documented problem with honest dissent. Seniority hierarchies, political dynamics, and the desire for alignment mean that critical challenges often do not get raised until after the decision has already been made, and by then the cost of reversing course is significantly higher.

AI, when properly prompted, does not have those constraints. A well-structured challenge prompt asks AI to do three specific things: make the strongest possible case against the preferred option, identify the two or three assumptions that are most likely to be wrong, and describe in concrete terms what a failure scenario looks like twelve months out. The output is not a reason to abandon the direction. It is the intellectual stress test that most boardroom conversations skip.
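One way to make the three-part challenge repeatable is to keep it as a template rather than retyping it for each decision. The sketch below assumes nothing about which AI tool receives the prompt; it only assembles the text. The function name `build_challenge_prompt` and its parameters are hypothetical.

```python
def build_challenge_prompt(preferred_option: str, context: str,
                           horizon_months: int = 12) -> str:
    """Assemble an adversarial prompt for a proposed decision."""
    tasks = [
        "Make the strongest possible case against the preferred option.",
        "Identify the two or three assumptions that are most likely to be wrong.",
        "Describe in concrete terms what a failure scenario looks like "
        f"{horizon_months} months out.",
    ]
    numbered = "\n".join(f"{i}. {task}" for i, task in enumerate(tasks, start=1))
    return (
        f"Preferred option: {preferred_option}\n"
        f"Context: {context}\n\n"
        "Act as a critical challenger, not an advocate.\n"
        f"{numbered}"
    )


if __name__ == "__main__":
    # Placeholder decision and context, for illustration only.
    print(build_challenge_prompt(
        preferred_option="Enter the new market next fiscal year",
        context="Summary of the board discussion so far goes here.",
    ))
```

Keeping the three tasks fixed and varying only the option and context means every major decision gets challenged on the same terms, which also makes the outputs easier to compare over time.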

Beyond using AI as a challenger for individual decisions, leadership teams that get the most out of this step tend to do two things systematically. The first is running test use cases before applying AI to high-stakes decisions. This means selecting a lower-stakes decision that has already been made, running it through the AI challenge process, and comparing the output against what was actually decided and what actually happened. The gap between the two is usually instructive, and it builds the team’s intuition for where AI challenge is most valuable versus where human judgment consistently outperforms.

The second is training the team to use AI as part of their daily working process, not just for formal decisions. When senior leaders and their teams use AI regularly for smaller analytical tasks, such as drafting briefing notes, reviewing options, and summarizing market data, they develop a practical literacy for how to prompt well, where AI tends to be reliable, and where it tends to produce confident-sounding output that does not hold up under scrutiny. That literacy is what makes the challenge process in high-stakes decisions actually work. Teams that only reach for AI when the stakes are high have not built the muscle memory to use it well.

Step 5: Decide, document, and communicate clearly

The decision belongs to the CEO, and so does the accountability for it. What AI changes is the quality and speed of documentation surrounding that decision, capturing the context, the alternatives considered, the criteria applied, the risks acknowledged, and the metrics that will determine whether it worked. Good documentation of this kind is not bureaucracy. It creates institutional memory that survives leadership transitions, gives the board a clear rationale to engage with, and provides the communication architecture for cascading the decision across the organization, all of which AI can produce in a fraction of the time these tasks would otherwise require.

How this plays out in real decisions

The framework above is useful in theory. What makes it credible is seeing how it applies to the kinds of decisions that actually sit on a CEO’s desk.

Crisis response

When a crisis hits, whether that is a product failure, a key executive departure, a regulatory action, or a reputational incident, the pressure to respond quickly almost always degrades the quality of the response. Leadership teams reach for the nearest available framing, communicate reactively, and often make commitments in the first 48 hours that they spend the following weeks walking back.

AI changes the economics of crisis preparation significantly. A well-structured AI process can generate stakeholder communication drafts across multiple channels simultaneously, model how different response postures are likely to land with different audiences, surface comparable incidents from other organizations and what the consequences of each response type turned out to be, and identify the second- and third-order implications of each option before any public statement goes out. The CEO still decides the tone, the substance, and the timing, but they are doing so with a quality of analysis that previously required days and a large communications team to produce.

Mergers and acquisitions

M&A decisions are among the most consequential a CEO makes, and also among the most information-intensive. Due diligence processes generate enormous volumes of data across legal, financial, operational, and cultural dimensions, and the integration risk that ultimately determines whether a deal creates or destroys value is notoriously difficult to assess in the time available before signing.

AI can accelerate and deepen due diligence by synthesizing financial filings, identifying anomalies in reported figures, mapping the target’s competitive positioning against market data, and modeling integration scenarios under different assumptions about cost synergies, revenue retention, and organizational fit. More usefully, AI can be prompted to build the bear case for the acquisition explicitly, articulating the specific conditions under which the deal fails and what early warning signals would look like. Most M&A processes do not produce that kind of structured skepticism because no one whose career depends on the deal closing has an incentive to generate it.

Organizational and compensation decisions

Decisions about organizational structure, compensation benchmarking, and executive hiring are often made with less analytical rigor than capital allocation decisions of equivalent financial impact, largely because they feel more qualitative. AI closes that gap in practical ways. For compensation benchmarking, AI can aggregate market data across geographies, roles, and company stages faster than any manual research process. For organizational design, AI can model the downstream effects of reporting structure changes on decision speed, communication flow, and accountability clarity. For executive hiring, AI can pattern-match candidate profiles against the characteristics of high performers in similar roles at comparable companies, raising considerations that a panel interview process is unlikely to surface on its own.

Pricing and revenue strategy

Pricing decisions sit at the intersection of customer behavior, competitive dynamics, unit economics, and organizational risk tolerance, which makes them genuinely complex and often politically charged internally. AI can model the revenue and margin implications of different pricing architectures across customer segments, simulate competitive responses, and identify the scenarios where a pricing change accelerates churn versus those where it improves mix. What this enables for the CEO is a pricing conversation that is grounded in modeled outcomes rather than in whoever made the most confident argument in the room.

Where AI adds value and where it does not

| Decision type | Traditional approach | AI-augmented approach |
| --- | --- | --- |
| Market expansion | Senior team workshop, analyst report, 2-3 weeks | TAM analysis, competitor mapping, regulatory scan in hours |
| Budget allocation | Historical precedent, internal lobbying, Excel models | Scenario modeling across 20+ variables with sensitivity analysis |
| C-suite hiring | Reference calls, panel interviews, assessments | Culture-fit pattern matching, team dynamics modeling, success profile comparison |
| Crisis response | War room, PR agency brief, reactive messaging over days | Scenario playbooks in hours, stakeholder comms drafted across multiple channels simultaneously |
| M&A due diligence | Manual document review, advisor-led analysis | AI synthesis of financials, anomaly detection, integration scenario modeling |
| Pricing strategy | Internal debate, selective market data, gut instinct | Multi-segment revenue modeling, competitive response simulation, churn sensitivity analysis |

When to use AI for decisions and when not to

One of the more practical questions for any leadership team is not how to use AI across all decisions, but how to identify which decisions actually benefit from it and which do not. The distinction matters because applying AI indiscriminately can create a false sense of rigor without improving outcomes, and in some cases it can actively make things worse by optimizing for what is measurable at the expense of what actually matters.

Decisions that benefit most from AI share a few characteristics: they involve multiple interdependent variables, they have sufficient historical data or comparable cases to draw from, the success criteria can be defined in concrete terms, and the cost of a wrong decision is high enough to justify the analytical investment. Market entry, capital allocation, pricing, and risk assessment tend to fit this profile well.

Decisions that benefit least tend to be ones where the primary inputs are relational, contextual, or values-based rather than analytical. Examples include whether to part ways with a long-tenured leader who has become a cultural liability, how to respond to an employee relations situation that has no clean precedent, and whether a proposed partnership aligns with what the organization actually stands for. In these decisions AI can provide background context but should not be the primary input, because the judgment required is fundamentally human and the consequences of over-indexing on quantitative analysis can be significant.

The ways AI-assisted decisions go wrong

The real risks in AI-assisted decision making are not where most people look. The failure mode is rarely the algorithm; it is the data feeding it, the questions being asked of it, and the human behavior around it.

The first risk is data quality. If the underlying data is biased, incomplete, or lagging behind reality, AI will reflect those limitations with high confidence and well-formatted prose, which is arguably more dangerous than an obvious gap in the analysis because it looks authoritative even when the foundation is not.

The second is hallucination. Unlike data quality issues, which can at least be traced back to a source, AI hallucination produces outputs that are internally coherent, professionally presented, and factually wrong in ways that are difficult to detect without domain expertise. In a decision context, a hallucinated market size figure or a fabricated regulatory precedent can move a room before anyone thinks to verify it. The practical safeguard is treating AI output the same way a good analyst treats a first draft: useful as a starting point, not a finished input.

The third, and least discussed, is asking the wrong business question in the first place. AI is exceptionally good at answering the question it is given. If that question is poorly framed, the output will be precise, well-reasoned, and pointed in the wrong direction. A company asking “how do we reduce customer service costs?” will get a very different set of AI-generated options than one asking “how do we resolve customer issues faster?” and the difference between those two framings can determine whether the resulting decision improves or damages the customer relationship. The discipline of question design matters more with AI than without it, because AI removes the friction that would otherwise slow down a bad question.

Beyond these three, there is the accountability question. When AI recommendations align with the decision taken, there is a temptation to treat the AI rationale as cover, but the CEO signed off and the CEO is accountable, and that does not change because the analysis was machine-generated.

There are also categories of decision where AI simply should not be the primary input. Choices about organizational values, culture, and what the company stands for require moral reasoning and contextual judgment that current AI systems do not have, and using AI to optimize for measurable outcomes on a question that is fundamentally about identity is a misapplication of the tool, not an efficiency gain. Finally, because AI can compress weeks of analysis into hours, it creates pressure to compress the deliberation phase at the same rate. The human time required to sit with options, consult stakeholders, and let the implications settle serves a different function than the analysis phase, and the two should not be conflated.

Why individual AI use is not enough

Individual capability with AI tools produces marginal gains, while embedding AI into how decisions get made at the governance level produces structural ones. In practice this means defining, by decision type, how AI is expected to contribute before anything reaches the executive table, so that for budget decisions, AI scenario models are submitted alongside the proposal; for market entry, AI-generated landscape analyses are part of the standard brief; and for talent decisions, AI-assisted pattern matching informs the shortlist without determining it. The outcome is a leadership team that consistently operates closer to the ceiling of the information available to it, rather than the ceiling of what a supporting team can prepare under time pressure.
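Defining the expected AI contribution by decision type can be as simple as a lookup that a submission is checked against before it reaches the executive table. The following Python sketch is illustrative only: the decision types mirror the three examples above, while the artifact names and the `missing_ai_inputs` helper are hypothetical.

```python
# Hypothetical mapping of decision type to the AI-generated artifacts that
# must accompany a proposal. Names are placeholders for illustration.
REQUIRED_AI_INPUTS = {
    "budget": {"scenario_model"},
    "market_entry": {"landscape_analysis"},
    "talent": {"pattern_match_shortlist"},
}


def missing_ai_inputs(decision_type: str, submitted: set[str]) -> set[str]:
    """Return the required AI artifacts that a submission does not include.

    Decision types with no entry (for example, values-based decisions)
    have no AI requirement, so nothing is reported as missing.
    """
    required = REQUIRED_AI_INPUTS.get(decision_type, set())
    return required - submitted


if __name__ == "__main__":
    print(missing_ai_inputs("budget", {"proposal"}))
    print(missing_ai_inputs("budget", {"proposal", "scenario_model"}))
```

The point of the sketch is that the requirement lives in the process rather than in any individual's habits: a proposal is incomplete until the expected analysis is attached, regardless of who prepared it.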

Getting to that point requires more than individual willingness to adopt new tools. It requires infrastructure: the right platforms, data integrations, and workflow design that connects AI capabilities directly to the decision processes executives actually use. Most organizations underestimate this gap. The jump from “our team uses AI for some tasks” to “AI is embedded in how we govern decisions” is not a cultural shift alone. It is an architectural one, and it needs to be built deliberately.

This is exactly the problem Varmeta works on. Rather than deploying general-purpose AI tools and hoping adoption follows, Varmeta works with leadership teams to map their actual decision workflows, identify where AI augmentation creates the most leverage, and build the infrastructure that makes AI-assisted governance repeatable and scalable across the organization. The result is not a productivity tool sitting on someone’s laptop. It is a decision architecture that operates at the organizational level, where the compounding effect on quality and speed becomes visible within quarters, not years.

For organizations that are serious about moving from AI experimentation to AI-embedded decision making, the gap between those two states is primarily an execution and infrastructure problem. That is a solvable problem, and it is one worth solving sooner rather than later, because the organizations building this capability now are establishing an advantage that will be increasingly difficult to close from behind.

Frequently asked questions

1. What is AI decision making in a business context?

The structured use of AI tools to improve the quality of human judgment in organizations, through data synthesis, scenario modeling, and structured challenge, with the goal of producing better-informed human decisions rather than automated ones.

2. Does AI decision making replace executive judgment?

No. Decisions involving values, culture, accountability, and strategic direction remain human responsibilities, and AI’s role is to ensure those decisions are made with better evidence and more rigorous analysis, not to make them.

3. Which decisions benefit most from AI support?

High-complexity decisions with multiple interdependent variables, including market expansion, capital allocation, pricing strategy, M&A due diligence, and crisis response, benefit most. Decisions that are primarily relational or values-based benefit less and require more caution about over-reliance on AI input.

4. How do you stop AI from just validating what you already think?

The short answer is adversarial prompting, which means explicitly asking AI to argue against the preferred direction, find the strongest case for the alternative, and surface what the internal discussion has underweighted. Most AI output that simply validates existing assumptions does so because the question was framed to invite agreement, not because the model cannot do otherwise.

5. What is the most common mistake in enterprise AI adoption for decisions?

Treating it as a technology deployment rather than a process redesign. Deploying AI tools without changing how decisions are governed and documented produces marginal efficiency gains at best, while the organizations that see structural improvement are those that redesign the decision process itself around what AI can and cannot do well.

6. Is this relevant for companies that are not large enterprises?

Yes, and often more so, because smaller leadership teams lack the analyst infrastructure that large organizations rely on. AI provides access to the same analytical depth without the headcount, which meaningfully changes the economics of good decision making for growth-stage companies.

7. How do you measure whether AI-assisted decision making is actually working?

The most reliable indicators are decision cycle time, the number of scenarios evaluated before a major call is made, and the quality of documentation that comes out of the process. Over a longer horizon, the track record of decisions made with AI support versus those made without it tends to become visible in outcomes, though isolating the AI contribution specifically requires some discipline in how the process is structured and recorded from the start.
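Recording the process from the start can be lightweight. As a rough sketch, assuming each major decision is logged with an open date, a decision date, a scenario count, and a flag for whether AI support was used, the first two indicators can be compared across the two groups. The `DecisionRecord` fields and the `summarize` helper are hypothetical names, not an established schema.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass(frozen=True)
class DecisionRecord:
    name: str
    opened: date              # when the decision was formally raised
    decided: date             # when the call was made
    scenarios_evaluated: int
    ai_assisted: bool


def summarize(records, ai_assisted: bool):
    """Average cycle time and scenario count for one group of decisions.

    Returns None when the group is empty, so an absent comparison is not
    mistaken for a zero.
    """
    subset = [r for r in records if r.ai_assisted == ai_assisted]
    if not subset:
        return None
    return {
        "decisions": len(subset),
        "avg_cycle_days": mean((r.decided - r.opened).days for r in subset),
        "avg_scenarios": mean(r.scenarios_evaluated for r in subset),
    }
```

A comparison like this shows correlation only. Decisions routed through an AI-assisted process may differ systematically from those that are not, which is why the log needs to be structured before the first decision is recorded rather than reconstructed afterwards.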

Conclusion

The leaders who will get the most from AI are not the ones who use it the most. They are the ones who use it in the right places: framing, scenario modeling, honest challenge, and disciplined documentation, none of which replaces judgment but all of which sharpen the conditions under which judgment gets exercised. The practical starting point is to pick one recurring decision that costs your team significant time and analysis effort, build an AI-assisted process around it, and measure what changes. The results will make the next step obvious.

For organizations looking to build that kind of AI infrastructure seriously, from the data layer through to the decision workflows that executives actually use, Varmeta works with leadership teams on exactly this problem.