The digital world is evolving faster than ever, but so are the threats lurking within it. Today, traditional security measures are struggling to keep up with sophisticated hackers who use automation to strike at lightning speed. This creates a massive “security gap” where human teams are overwhelmed by data and emerging vulnerabilities. To survive, organizations must turn to artificial intelligence in security to transform their defense from reactive to proactive.

In this guide, you will discover how AI acts as both a shield and a brain for your network. We explore the critical balance between leveraging AI for protection and securing the models themselves from manipulation.

What is AI in Security and Why Does It Matter Today?

Artificial Intelligence in security is the use of smart technology, like algorithms that learn from data, to protect digital systems and networks. In simple terms, it acts like a digital brain that never sleeps, constantly watching for “weird” behavior that might indicate a hack or a virus.

While traditional security only recognizes threats it has seen before, AI can predict and stop new, unknown attacks by recognizing patterns. It matters today because cyberattacks have become too fast and too complex for humans to handle alone; we need the speed of machines to fight the scale of modern threats.

The following table breaks down the core elements that make this technology work:

| Component | Simple Function | Role in Security |

| Machine Learning (ML) | Learning from experience | Establishing a “normal” baseline of behavior to flag unusual activity like unauthorized logins. |

| Deep Learning (DL) | Mimicking the human brain | Analyzing massive, complex datasets to detect hidden anomalies and power advanced facial or voice recognition. |

| Natural Language Processing (NLP) | Understanding human language | Allowing security teams to talk to systems in plain English to get instant reports or threat summaries. |

| Generative AI | Creating new content | Helping analysts write code for fixes or simulating “what-if” attack scenarios to test defenses. |

How is Artificial Intelligence Transforming Modern Cybersecurity?

Proactive Threat Detection and Pattern Recognition

AI identifies “zero-day” vulnerabilities before hackers can exploit them. It does not wait for a known virus signature. Instead, it monitors networks for unusual behaviors signaling a new attack. This stops unknown threats before software providers even release a patch.

AI analyzes massive datasets faster than any human can. It studies real-time traffic to learn what “normal” activity looks like. When a small anomaly appears, the AI instantly flags it. This catches hidden threats that human teams often miss.

Automated Incident Response (SOAR)

AI integration with SOAR platforms drastically reduces the time to respond. It automates routine tasks and complex investigation workflows. This speeds up decision-making for security teams. Organizations using AI contain breaches much faster than those without it.

During an attack, AI acts instantly to isolate infected endpoints. It works in real-time without needing manual human intervention. AI-enhanced tools monitor devices and cloud apps constantly. It blocks malicious traffic and disconnects assets to stop the spread.

Predictive Behavioral Analytics

AI uses Behavioral Analytics (UEBA) to track internal security. It creates profiles of how users and devices typically behave. If a user tries to access unauthorized files, the system flags it. It can force a password reset automatically to stop insider threats.

AI also predicts future attack vectors by studying global threat intelligence. It analyzes past attacks to find trends and high-risk areas. This helps organizations patch vulnerabilities before they are targeted. By using global knowledge, AI adapts to new threats proactively.

What is the Difference Between AI for Security and Securing AI?

The main difference between AI for Security and Securing AI is that the former uses artificial intelligence to defend digital assets, while the latter protects the AI models themselves from being compromised. In particular, AI for Security acts as a defensive tool for the entire network, whereas Securing AI serves as armor for the specific algorithms and data pipelines.

AI for Security (The Shield)

This involves applying intelligent technologies to safeguard an organization’s infrastructure and data from external threats. AI acts as a shield by identifying risks and managing responses at high speeds. It processes vast datasets to find hidden dangers that human teams might overlook.

Leading platforms demonstrate how this technology defends modern environments:

- Microsoft Security Copilot: Uses generative models to help analysts simplify complex data and resolve incidents quickly.

- Fortinet FortiAI: Functions as a digital analyst that detects and classifies threats in real-time using machine learning.

Securing AI (The Armor)

This focuses on defending the integrity of AI components, including their training data, models, and core algorithms. As hackers target intelligence systems, this armor ensures the AI remains reliable and safe. It prevents attackers from manipulating the system to produce harmful results.

Key strategies for protecting these systems include:

- Adversarial Defense: Preventing hackers from using deceptive inputs to trick the model into making wrong decisions.

- Anti-poisoning measures: Safeguarding training sets to ensure no one corrupts the data to change how the AI learns.

- Supply chain security: Protecting third-party libraries and external sources used during the AI development process.

- Lifecycle integrity: Monitoring the entire build process to block malicious code injections and ensure model quality.

What are the Main Security Risks and Vulnerabilities of AI Systems?

While AI provides powerful defense capabilities, it also introduces a new set of unique vulnerabilities that hackers can exploit. Understanding these threats is the first step in building a resilient system that can withstand modern digital attacks.

Data Poisoning and Input Manipulation

Data poisoning happens when hackers secretly tamper with the information used to train an AI. By feeding the system corrupted data, they change how the model behaves at its core. This ruins the AI’s reliability and accuracy before it ever starts working.

Input manipulation, or adversarial attacks, targets the AI after it is already trained. Attackers subtly change the data they feed the model to trick its algorithms. This forces the AI to make wrong predictions, allowing hackers to bypass security undetected.

Prompt Injection in Generative AI

Prompt injection is a major risk for Large Language Models (LLMs) and generative tools. Attackers send specially crafted text to trick the AI into ignoring its safety rules. By exploiting natural language, they force the model to perform forbidden or harmful actions.

These bypass attacks can make the AI generate malicious code or delete important files. They can also trick the system into ignoring access controls. This vulnerability turns a helpful assistant into a tool for cybercriminals.

Model Evasion and Privacy Leaks

Model evasion occurs when attackers change their digital footprint to dodge an AI’s detection. They modify their input data so the system fails to recognize their malicious intent. This allows them to influence the AI’s decisions to their advantage.

Privacy leaks happen when hackers extract sensitive data memorized by the AI during training. They use reverse engineering to steal secret algorithms or model weights. Attackers can also trick the AI into revealing private personal information or confidential company records.

How Can Organizations Implement AI Security Best Practices?

Building a resilient defense requires more than just installing software; it demands a strategic approach to artificial intelligence in security. By following these industry-proven best practices, organizations can maximize the benefits of AI while minimizing its inherent risks.

Establishing a Governance and Ethical Framework

Organizations must build robust governance and ethical frameworks to manage unique AI risks. A foundational practice is aligning policies with global standards like the NIST AI Risk Management Framework. This helps teams systematically evaluate and formalize security controls for all AI deployments.

Securing AI also requires defining clear “Human-in-the-loop” protocols for critical decision-making. AI should not be the final authority in high-stakes scenarios where biased data could lead to unfair consequences. Instead, organizations should ensure AI outputs are validated by human oversight and judgment.

Data Hygiene and Model Auditing

An AI system’s reliability depends entirely on the quality and integrity of its underlying data. Organizations must continuously monitor and test models for “drift,” decay, and bias. Identifying these shifts early prevents adversaries from exploiting weakened models to manipulate system outputs.

Strict data governance is also needed to secure training environments and machine learning pipelines. Protecting the entire development lifecycle, including third-party libraries, is critical to preventing data poisoning. This stops cybercriminals from feeding the system corrupted data to secretly alter its behavior.

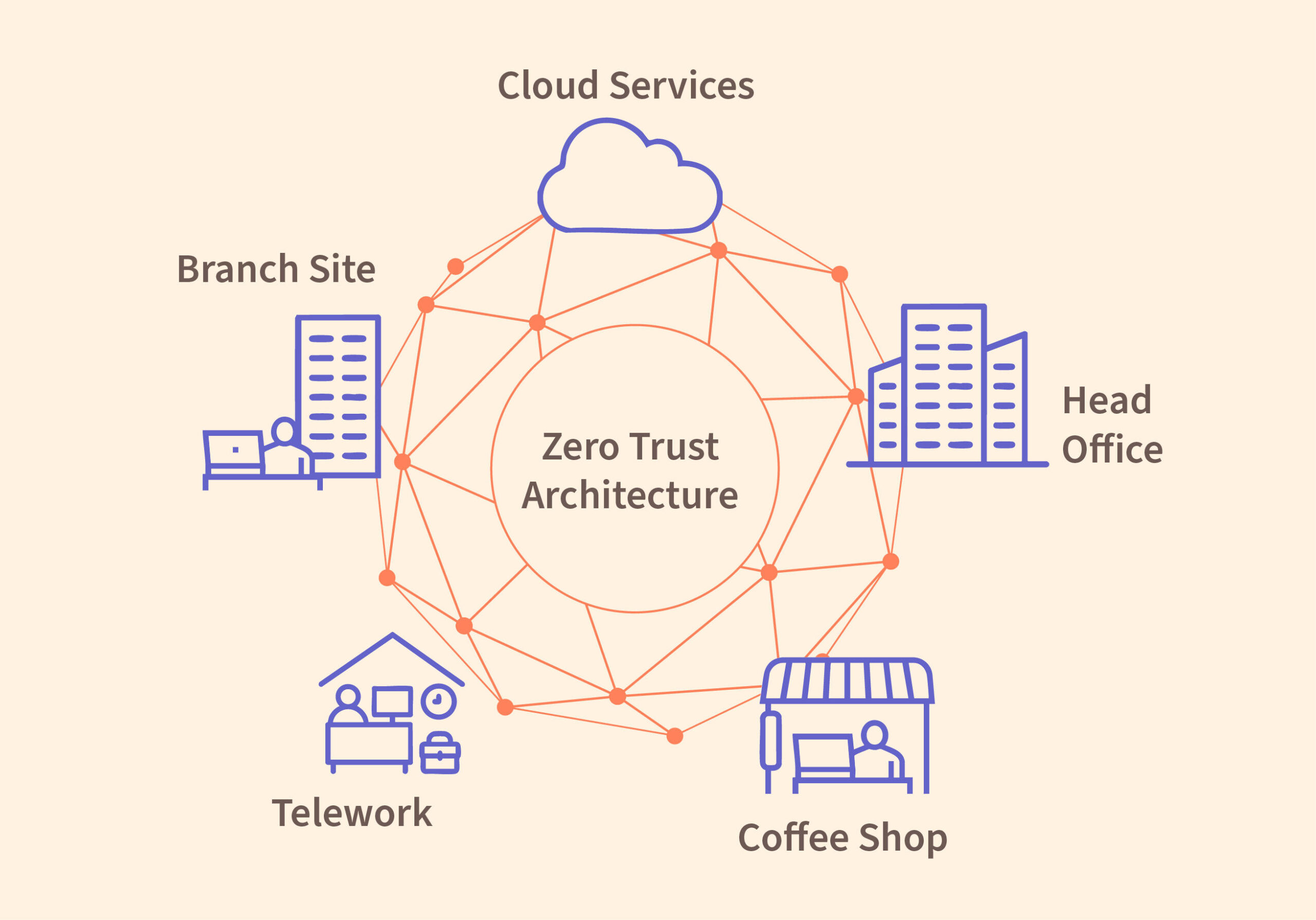

Adopting a Zero Trust Architecture for AI

Integrating new AI tools expands the attack surface, making a Zero Trust approach essential. This involves applying strict identity verification and “never trust, always verify” rules to both humans and AI agents. Security teams must move beyond simple perimeter defenses to protect sensitive data.

To safely use autonomous AI, organizations should establish runtime guardrails for all AI agents. Best practices include using centralized gateways to control supply chains and monitoring agent behavior for anomalies. Restricting AI access to sensitive environments helps prevent prompt injections and unauthorized data theft.

The Future Hold for AI in the Security Industry

The landscape of digital defense is shifting from manual monitoring to a high-speed battle between intelligent systems. As artificial intelligence in security matures, it will create a world where defense is measured in seconds rather than months.

The Rise of Hyper-Automation

The industry is moving toward hyper-automation, where autonomous AI systems manage high-volume tasks and evolve alongside new threats. Organizations are now using AI agents in Security Operations Centers (SOCs) to triage alerts and handle incident responses automatically. This shift saves massive amounts of human time and significantly reduces financial risk.

The data supporting this shift is overwhelming:

- Market growth: The AI security market is expected to grow from USD 20 billion in 2023 to over USD 141 billion by 2032.

- Faster response: AI-driven tools help organizations contain data breaches 108 days faster than traditional methods.

- Cost savings: Using AI can reduce the average cost of a data breach by USD 1.76 million.

- Efficiency: Some platforms claim to resolve threats 99% faster than manual incident response.

However, attackers also use this automation. An AI agent recently found and exploited a “zero-day” vulnerability in a secure system in just four hours. This highlights the escalating speed of modern AI-driven threats.

AI-Powered Social Engineering

Threat actors are weaponizing generative AI to launch highly sophisticated and personalized social engineering attacks. Large Language Models (LLMs) allow criminals to create convincing phishing campaigns that lack the grammatical errors of the past. These attacks can impersonate high-profile executives at an unprecedented scale.

Attackers also use deepfake audio and video to simulate real individuals, tricking employees into granting access to critical systems. The impact is clear: 75% of security professionals report an increase in attacks, with 85% blaming the rise on bad actors using generative AI.

Collaboration Between Human Intelligence and Machine Speed

The future focuses on “Human-Machine Teaming,” which augments human analysts rather than replacing them. While AI processes vast datasets at machine speed, human expertise is essential for high-level strategy and complex problem-solving. This partnership provides two main advantages:

- Bridging the skills gap: AI translates complex data into plain language, helping junior analysts perform advanced tasks. This is vital since there are over 700,000 unfilled cybersecurity jobs in the U.S. alone.

- Shifting to proactive defense: By offloading repetitive tasks to AI, human workers can focus on proactive threat hunting. AI handles the data overload, while humans focus on critical, ethical decision-making.

Conclusion: Securing the Future with Varmeta

Adopting artificial intelligence in security is a practical shift toward more durable digital defense. As technical threats become faster and more automated, relying solely on manual oversight is no longer sustainable. The key to success lies in integrating smart tools while maintaining strong human governance and protecting the AI models themselves. By focusing on these core strategies, businesses can navigate the evolving landscape with clarity and confidence.

Want to strengthen your defense? Connect with Varmeta for professional insights on building secure, AI-ready infrastructure tailored to your needs.

FAQs

How is AI used in security?

AI is primarily used to analyze massive amounts of data in real-time to identify patterns that signal a cyberattack. It automates threat detection, predicts potential vulnerabilities, and powers rapid incident response tools. By learning from historical data, it can spot “zero-day” threats that traditional software might miss.

What are the 4 types of data security?

In the context of protecting systems, the four main pillars are:

- Encryption: Encoding data so only authorized parties can read it.

- Data masking: Hiding specific data elements (like credit card numbers) within a database.

- Data resiliency: Ensuring data can be recovered after a breach or hardware failure via backups.

- Access control: Restricting who can view or use sensitive information based on their identity and role.

What is the future of AI in security?

The future points toward “Hyper-automation” and “Human-Machine Teaming.” We will see autonomous security centers that resolve most threats without human help, while human experts focus on high-level strategy. However, we also expect more sophisticated AI-powered phishing and deepfake attacks from hackers.

Can AI help prevent insider threats?

Yes, AI uses User and Entity Behavior Analytics (UEBA) to establish a baseline of what “normal” employee behavior looks like. If an account suddenly tries to download unusual amounts of data or logs in from an unknown location at 3 AM, the AI can automatically flag the activity or lock the account to prevent data theft.

Is AI security expensive for small businesses?

While enterprise-level tools can be costly, many cloud-based security providers now offer AI-driven protection as part of their standard packages. For small businesses, the cost of an AI subscription is often much lower than the financial and reputational damage caused by a single data breach.