In the field of machine learning, fine-tuning has emerged as a powerful method for adapting pre-trained models to perform highly specialized tasks. Rather than building models from scratch, it leverages the foundational knowledge of pre-trained models and adjusts them to address specific requirements. This approach has revolutionized industries by enabling faster, more efficient deployment of AI solutions tailored to unique challenges.

The value of fine-tuning lies in its ability to improve performance substantially with relatively little data. For example, one study on product attribute extraction reported that fine-tuning with just 200 samples raised model accuracy from 70% to 88%. This capability not only improves results but also reduces the cost and time associated with training models from the ground up.

As machine learning continues to evolve, fine-tuning is becoming an essential strategy for achieving domain-specific accuracy, making it a cornerstone for businesses and researchers aiming to maximize the potential of AI-powered applications.

What is fine-tuning?

Fine-tuning is a machine learning technique that adapts a pre-trained model to perform a specific task. Instead of training a model from scratch, it starts with an existing model that has been pre-trained on a large dataset and refines it using task-specific data. This process adjusts the model’s parameters to better fit the nuances of the new task, enabling it to deliver highly accurate results with minimal additional training.

How fine-tuning differs from pre-training

While pre-training focuses on teaching a model general patterns or knowledge from a large and diverse dataset, fine-tuning narrows its scope by homing in on task-specific patterns and requirements. For instance, a language model pre-trained on vast amounts of general text might be fine-tuned to specialize in legal document analysis or medical transcription, tailoring its outputs to the specific needs of the domain.

Key advantages

- Faster model adaptation: Fine-tuning significantly reduces training time because the model has already learned general features during pre-training. This allows organizations to quickly adapt AI solutions to their unique challenges.

- Improved performance with task-specific data: By refining a pre-trained model with domain-specific datasets, fine-tuning enhances the model’s ability to handle specialized tasks with greater accuracy and precision.

- Cost and resource efficiency: Training a model from scratch requires extensive computational resources and data. Fine-tuning, on the other hand, reuses pre-trained models, making it a cost-effective alternative that maximizes the use of existing knowledge.

In essence, fine-tuning bridges the gap between general-purpose AI and domain-specific expertise, enabling businesses and researchers to deploy customized, efficient, and accurate AI solutions.

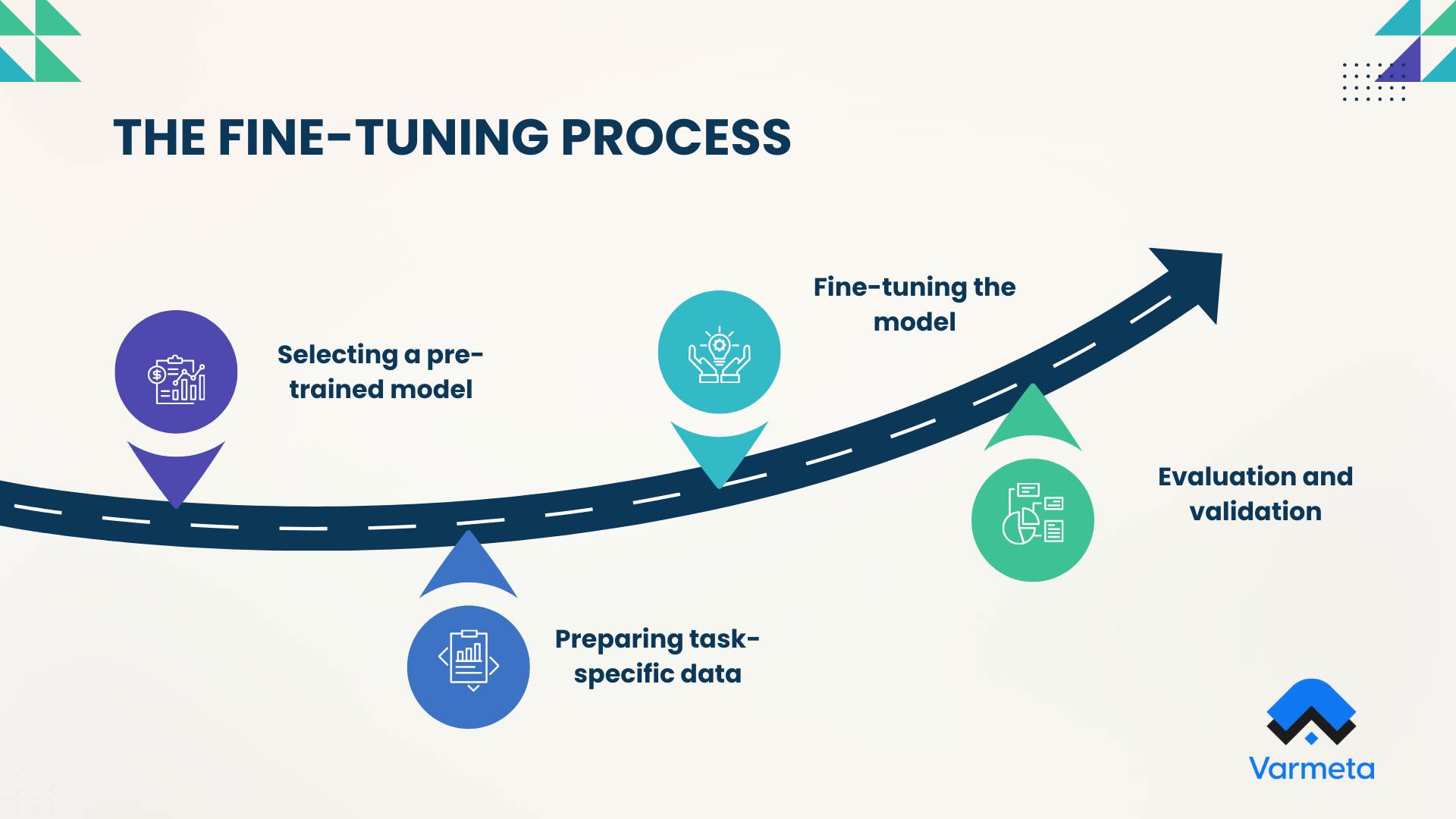

The fine-tuning process

Fine-tuning a model involves a structured series of steps that adapt a pre-trained model for a specific task. From selecting the right foundation to optimizing the final output, each phase plays a crucial role in achieving task-specific accuracy and performance.

1. Selecting a pre-trained model

The first step is to choose a pre-trained model that aligns with your task. Popular options include:

- BERT: Ideal for natural language processing (NLP) tasks like sentiment analysis or text classification.

- GPT (e.g., GPT-3, GPT-4): Best for generating coherent text, answering questions, or summarizing content.

- ResNet: Widely used for computer vision tasks such as image classification, and as a backbone for object detection models.

Factors to consider when choosing a model:

- Task relevance: The model should excel at tasks similar to your intended application.

- Size vs. resources: Larger models often perform better but require significant computational power.

- Community support and documentation: Well-documented models with active user communities can simplify fine-tuning.

2. Preparing task-specific data

High-quality data is the backbone of successful fine-tuning. This step involves:

- Data collection and cleaning: Gather relevant data for the specific task, ensuring it’s comprehensive and free from errors, duplicates, or irrelevant entries.

- Labeling and annotating: Accurately label the data to provide the model with clear examples of the desired output. For instance, tag sentiment in text data or label objects in images.

- Ensuring dataset quality: Ensure the dataset is diverse, balanced, and representative of the real-world scenarios the model will encounter. A biased or incomplete dataset can lead to poor performance or overfitting.
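The cleaning and balance checks above can be sketched in a few lines of standard-library Python. This is a minimal illustration, assuming the data is a list of `(text, label)` pairs; the helper names `clean_examples` and `label_distribution` and the sample reviews are hypothetical, not part of any particular library.

```python
from collections import Counter


def clean_examples(examples):
    """Drop empty texts and duplicates (compared after whitespace/case normalization)."""
    seen = set()
    cleaned = []
    for text, label in examples:
        key = " ".join(text.split()).lower()
        if not key or key in seen:
            continue  # skip empty entries and repeats
        seen.add(key)
        cleaned.append((text.strip(), label))
    return cleaned


def label_distribution(examples):
    """Return the share of each label, which makes class imbalance easy to spot."""
    counts = Counter(label for _, label in examples)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


raw = [
    ("Great product!", "positive"),
    ("great   product!", "positive"),  # duplicate after normalization
    ("", "negative"),                  # empty entry
    ("Arrived broken.", "negative"),
    ("Does the job.", "neutral"),
]
cleaned = clean_examples(raw)
distribution = label_distribution(cleaned)
print(len(cleaned), distribution)
```

A strongly skewed distribution at this stage is a cue to collect more examples of the rare classes before fine-tuning, rather than something to correct afterwards.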

3. Fine-tuning the model

With a pre-trained model and task-specific data in hand, the next step is to fine-tune the model by adapting its architecture and parameters:

- Adjusting model architecture: Modify the model’s layers or output structure to align with the task. For example, replace the output layer to match the number of classes in a classification task.

- Optimizing hyperparameters: Experiment with learning rates, batch sizes, and training epochs to find the optimal configuration for training efficiency and performance.

- Avoiding overfitting: Use techniques like dropout layers, early stopping, and data augmentation to prevent the model from memorizing the training data and losing generalization capabilities.
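To make the idea of replacing the output layer concrete, here is a toy sketch in plain Python rather than a deep learning framework. It assumes a fixed function, `frozen_backbone`, standing in for the pre-trained layers (whose weights are never updated), and trains only a new logistic-regression head on top of its features. The function names, the toy data, and the hyperparameter values are all illustrative assumptions.

```python
import math
import random


def frozen_backbone(x):
    """Stand-in for pre-trained layers: a fixed feature extractor that is never updated."""
    return [x[0] + x[1], x[0] - x[1]]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data, learning_rate=0.5, epochs=200, seed=0):
    """Train a new binary output layer with SGD while the backbone stays frozen."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.1, 0.1) for _ in range(2)]
    bias = 0.0
    # Features can be computed once because the backbone does not change.
    features = [(frozen_backbone(x), y) for x, y in data]
    for _ in range(epochs):
        for f, y in features:
            p = sigmoid(sum(w * v for w, v in zip(weights, f)) + bias)
            error = p - y  # gradient of the log loss with respect to the logit
            weights = [w - learning_rate * error * v for w, v in zip(weights, f)]
            bias -= learning_rate * error
    return weights, bias


def predict(x, weights, bias):
    f = frozen_backbone(x)
    return 1 if sigmoid(sum(w * v for w, v in zip(weights, f)) + bias) >= 0.5 else 0


# Toy task-specific data: the label is 1 when x[0] + x[1] is large.
train_data = [((0.0, 0.0), 0), ((0.2, 0.3), 0), ((0.4, 0.1), 0),
              ((0.9, 0.8), 1), ((1.0, 0.6), 1), ((0.7, 0.9), 1)]
weights, bias = train_head(train_data)
print([predict(x, weights, bias) for x, _ in train_data])
```

In a real framework the same pattern usually means swapping the final layer for one sized to the new label set and choosing which earlier layers, if any, to unfreeze; the learning rate and epoch count here are the hyperparameters one would tune.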

4. Evaluation and validation

Once the model is fine-tuned, it’s crucial to evaluate its performance using reliable metrics:

- Performance metrics: Assess the model with task-relevant metrics such as accuracy, F1-score, precision, and recall. These metrics provide insights into how well the model performs across different dimensions of the task.

- Baseline comparisons: Compare the fine-tuned model’s results with baseline models to measure improvement. Baselines could include simpler algorithms or the unmodified pre-trained model.

- Cross-validation: Use cross-validation techniques to ensure the model’s performance is consistent across different subsets of the dataset.
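The metrics and the cross-validation split above can be written out directly. The sketch below is a plain-Python illustration for a binary task; the helper names `precision_recall_f1` and `k_fold_indices` and the sample labels are assumptions made for the example, and production code would more likely rely on an established evaluation library.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for k contiguous folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        stop = start + size
        validation = list(range(start, stop))
        train = [i for i in range(n_samples) if i < start or i >= stop]
        yield train, validation
        start = stop


y_true = [1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 0, 1, 1, 1]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
folds = list(k_fold_indices(n_samples=6, k=3))
print(accuracy, precision, recall, f1)
```

Running the same metric function on the unmodified pre-trained model and on the fine-tuned one gives the baseline comparison described above. In practice the data should be shuffled before folds are assigned.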

Fine-tuning transforms a general-purpose pre-trained model into a task-specific powerhouse capable of delivering accurate, efficient, and meaningful results. By following these steps, businesses and researchers can leverage the power of AI to tackle complex, domain-specific challenges with precision.

Applications of fine-tuning for specific tasks

Fine-tuning allows pre-trained models to specialize in specific tasks across various industries, transforming general-purpose AI into domain-specific solutions. Here’s how it is making an impact in key areas:

Natural Language Processing (NLP)

Fine-tuning in NLP enables AI systems to understand and generate human-like language tailored to specific contexts. Applications include:

- Sentiment analysis: Identifying positive, negative, or neutral sentiments in customer reviews, social media posts, or surveys.

- Translation: Translation models such as MarianMT or mBART can be fine-tuned to deliver more accurate translations for specific language pairs or industry contexts.

- Question answering: Conversational systems such as ChatGPT are built on fine-tuned models, and further fine-tuning on domain material helps a model answer user queries in settings like research or customer support.

- Chatbots and conversational AI: Fine-tuned conversational models power virtual assistants that provide relevant, context-aware responses in real-time.

Computer vision

Fine-tuning in computer vision enables models to handle complex tasks that require high accuracy in image analysis. Key applications include:

- Image classification: Identifying objects or categorizing images into predefined classes, such as detecting diseases in X-rays or recognizing product categories in retail.

- Object detection: Pinpointing specific objects within an image, such as vehicles in traffic management or defective items in manufacturing.

- Facial recognition: Fine-tuning enhances facial recognition systems for use in security, personalized marketing, or authentication.

Healthcare and life sciences

In healthcare, fine-tuning is critical for building highly specialized AI models that improve patient care and research. Examples include:

- Disease diagnosis: Analyzing medical images or patient data to detect conditions like cancer or diabetic retinopathy with higher accuracy.

- Drug discovery: Fine-tuned models accelerate research by predicting molecular interactions and identifying potential drug candidates.

- Medical image analysis: Models fine-tuned on MRI, CT, or ultrasound datasets help radiologists identify anomalies quickly and accurately.

Financial technology

The financial sector leverages fine-tuning to build models capable of handling sensitive data and making informed predictions. Use cases include:

- Fraud detection: Fine-tuned models analyze transaction patterns to flag fraudulent activities in real-time.

- Stock market predictions: AI systems predict stock prices or market trends by fine-tuning on historical financial data and economic indicators.

- Personalized recommendations: Financial platforms use fine-tuning to recommend investment strategies or credit products tailored to individual user profiles.

Industry-specific use cases

Fine-tuning adapts AI to the unique needs of various industries, enabling customized solutions:

- Retail: AI models fine-tuned for inventory management, product recommendations, or dynamic pricing.

- Manufacturing: Detecting defects on assembly lines or optimizing production processes through fine-tuned computer vision models.

- Customer service: Fine-tuned chatbots that understand industry-specific terminology, ensuring better customer interactions in sectors like travel, insurance, and telecom.

By fine-tuning pre-trained models, organizations can harness the power of AI to deliver highly accurate, efficient, and industry-relevant solutions, driving innovation and productivity across a wide range of applications.

The different types of fine-tuning

Fine-tuning can be approached in several ways, depending on the specific objectives and the nature of the task. Each method caters to distinct use cases, offering flexibility in adapting models for specialized applications.

Supervised fine-tuning

Supervised fine-tuning is the most widely used approach. In this method, the model is trained using a labeled dataset tailored to the target task, such as text classification or named entity recognition.

For example, in a sentiment analysis task, the model would be trained on a dataset of text samples labeled with their respective sentiments (e.g., positive, negative, neutral). By learning from these labeled examples, the model adapts to generate highly accurate predictions for the specified task.

Few-shot learning

When collecting a large labeled dataset isn’t feasible, few-shot learning offers an efficient alternative. This approach involves providing the model with just a few examples (or “shots”) of the task within the input prompt, enabling it to infer the task’s requirements without updating its weights. Strictly speaking, it complements fine-tuning rather than being a form of it.

For instance, a model tasked with classifying product reviews might be given a few labeled examples as part of its input, helping it understand the desired output without extensive retraining. Few-shot learning is especially useful for tasks with limited data availability.
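For the review-classification case just described, a few-shot prompt might be assembled as follows. This is only a sketch: the `Review:` / `Sentiment:` layout, the function name `build_few_shot_prompt`, and the sample reviews are illustrative assumptions, and the resulting string would still need to be sent to whichever model is in use.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Place a handful of labeled examples ahead of the unlabeled query."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")  # left open for the model to complete
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each product review as positive or negative.",
    [
        ("Battery lasts all day.", "positive"),
        ("Stopped working after a week.", "negative"),
    ],
    "The screen scratches easily.",
)
print(prompt)
```

Because the examples live in the prompt, changing the task is a matter of editing text rather than retraining, at the cost of a longer input on every request.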

Transfer learning

While all fine-tuning methods are a form of transfer learning, this specific approach involves repurposing a model trained on a general task to solve a different but related task. The idea is to leverage the knowledge the model has already acquired to address new challenges.

For example, a model pre-trained on a dataset of general text might be fine-tuned to identify legal terms and clauses in contracts. This approach uses the foundational understanding of language developed during pre-training to solve domain-specific problems with minimal additional effort.

Domain-specific fine-tuning

Domain-specific fine-tuning focuses on tailoring a model to handle content within a particular industry or field. The model is fine-tuned using text or data unique to the target domain, improving its ability to comprehend and generate relevant information.

For instance, a chatbot for a healthcare app would be fine-tuned using medical records, patient interactions, and clinical guidelines. This ensures that the model understands industry-specific terminology and provides accurate, context-aware responses tailored to healthcare users.

Each fine-tuning approach offers distinct advantages based on the task’s complexity, data availability, and domain-specific requirements. Whether it’s supervised fine-tuning for accuracy, few-shot learning for limited data scenarios, or domain-specific fine-tuning for specialized tasks, selecting the right method ensures that AI models are optimized for performance and relevance.

Benefits of fine-tuning for businesses

Fine-tuning offers businesses a powerful way to maximize the potential of AI while tailoring solutions to specific needs. It bridges the gap between pre-trained models and real-world applications, delivering significant advantages across industries:

Faster deployment of AI solutions

Fine-tuning leverages pre-trained models, reducing the time required to develop and deploy AI systems. Instead of training models from scratch, businesses can adapt existing models quickly to meet their specific goals.

Improved accuracy in domain-specific tasks

By focusing on task-specific data, fine-tuning enhances the precision and performance of AI systems in specialized areas, such as medical diagnosis, legal document analysis, or customer service chatbots.

Cost savings with pre-trained models

Training large AI models from scratch requires significant computational power and resources. Fine-tuning pre-trained models minimizes these costs, allowing businesses to invest more efficiently in data and infrastructure.

Scalability across projects and industries

Fine-tuned models can be easily adapted for multiple use cases within the same organization or industry, enabling businesses to scale AI capabilities across departments and projects without redundant efforts.

Challenges in fine-tuning

While fine-tuning offers numerous benefits, businesses must navigate several challenges to implement it successfully:

Overfitting on small datasets

Fine-tuning requires high-quality, task-specific data. When datasets are small or unbalanced, the model risks overfitting, meaning it performs well on the training data but poorly on new, unseen data. Addressing this requires careful regularization, data augmentation, and cross-validation.
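One common guard against overfitting is early stopping: training halts once the validation loss stops improving. The sketch below shows only the stopping rule, applied to a made-up list of per-epoch validation losses; the function name `early_stopping_epoch` and the `patience` and `min_delta` parameters are assumptions for illustration, although training libraries typically offer similar options.

```python
def early_stopping_epoch(validation_losses, patience=2, min_delta=0.0):
    """Return (best_epoch, stop_epoch) as 0-based indices into validation_losses."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch  # stop here, keep the best checkpoint
    return best_epoch, len(validation_losses) - 1


# Illustrative losses: improvement until epoch 3, then the model starts to overfit.
losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61]
best_epoch, stop_epoch = early_stopping_epoch(losses, patience=2)
print(best_epoch, stop_epoch)
```

The checkpoint saved at `best_epoch` is the one to keep. A larger `patience` tolerates noisier validation curves at the price of extra training time.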

Computational resource requirements

Despite being more efficient than training from scratch, fine-tuning still demands substantial computing power, especially for large models. Businesses must ensure they have access to sufficient GPU/TPU resources or scalable cloud infrastructure to support the process.
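A rough back-of-the-envelope estimate shows why resources still matter. The sketch assumes full fine-tuning in 32-bit precision with an Adam-style optimizer, which keeps the weights, their gradients, and two moment estimates per parameter, about 16 bytes per parameter in total. It ignores activations and framework overhead, so real usage is higher. The 110-million-parameter figure is purely illustrative.

```python
def full_finetune_memory_gb(num_parameters, bytes_per_value=4):
    """Estimate memory for weights, gradients, and two Adam moments (no activations)."""
    weights = num_parameters * bytes_per_value
    gradients = num_parameters * bytes_per_value
    adam_moments = 2 * num_parameters * bytes_per_value
    return (weights + gradients + adam_moments) / 1e9  # decimal gigabytes


estimate = full_finetune_memory_gb(110_000_000)
print(f"{estimate:.2f} GB before activations")
```

The estimate scales linearly with parameter count, which is why models with billions of parameters are often adapted by updating only a small subset of their weights.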

Balancing generalization and specialization

Fine-tuned models must strike a balance between retaining the broad knowledge from pre-training and specializing in the target domain. Over-specialization can limit the model’s versatility, while under-specialization may reduce its effectiveness in specific tasks. Careful hyperparameter tuning and validation are essential to maintain this balance.

Fine-tuning presents businesses with a cost-effective and efficient way to customize AI solutions, but it requires careful execution to overcome challenges. By addressing issues such as overfitting, resource demands, and the balance between generalization and specialization, organizations can fully unlock the potential of fine-tuning for their specific needs.

Conclusion

Fine-tuning has proven to be a critical strategy for optimizing AI models to excel in task-specific applications. By tailoring pre-trained models to meet unique requirements, businesses can achieve greater accuracy, efficiency, and relevance without the time and cost associated with building models from scratch. This makes fine-tuning an essential tool for industries ranging from healthcare and finance to retail and customer service.

For businesses looking to stay ahead in a rapidly evolving AI landscape, adopting fine-tuning practices offers a competitive edge. It allows organizations to deploy solutions that are not only faster and more efficient but also highly adaptable to changing demands and specialized tasks. Whether it’s improving customer interactions, enhancing decision-making, or automating complex processes, fine-tuning empowers AI to deliver scalable and impactful results.

As AI continues to advance, fine-tuning will play a pivotal role in bridging the gap between general-purpose models and real-world applications. It is more than a method: it is a pathway to innovation, precision, and transformative AI solutions that redefine what’s possible in technology. Now is the time for businesses to harness the power of fine-tuning and unlock their full potential in the AI-driven future.